For years, developers and tech leads have enjoyed a certain level of predictability with AI coding assistants. You sent a message, you got a fix, and the cost was a known variable. But as of April 2, 2026, the rules of the game have fundamentally changed.

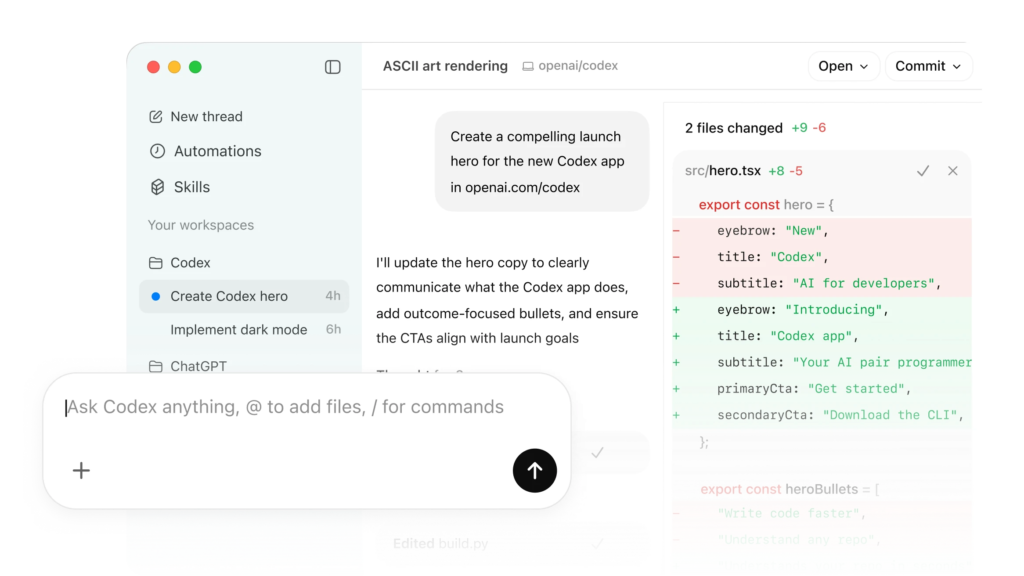

OpenAI has officially pulled the plug on its legacy “per-message” billing for Codex, its flagship AI agent. In its place stands a new, more granular architecture: token-based pricing. While this shift is rolling out initially to Business and Enterprise clients—with Plus and Pro users soon to follow—it marks a definitive end to the “all-you-can-eat” buffet of AI development.

This isn’t just a minor administrative update; it is a strategic pivot. By indexing costs to actual consumption—differentiating between input, output, and cached tokens—OpenAI is signaling a move toward a “usage-based reality.” For the first time, the true computational cost of refactoring a massive codebase is being passed directly to the user.

Whether this represents a win for transparency or a looming “bill shock” for engineering departments remains to be seen. One thing is certain: in the high-stakes race against competitors like Anthropic, the era of subsidized, unlimited AI interactions is over. Welcome to the age of the metered IDE.

The Shift: From “Messages” to “Tokens”

Until recently, Codex operated on a “per-message” basis. It was a democratic, if somewhat inefficient, system: whether you asked the AI to write a simple “Hello World” script or requested a complex refactor of a 50-file microservice, the “cost” to your plan was the same. While this offered great budget predictability, it meant that light users were effectively subsidizing the heavy computational demands of power users.

The New Way: Granular Metering

The new model treats AI usage like a utility, similar to electricity or water. You are now billed based on the exact volume of data processed, broken down into three distinct categories:

- Input Tokens: The data you send to the model (your prompts, existing code, and instructions).

- Cached Input: A “discounted” category for data the model has recently seen, rewarding efficient developers who stay within the same context.

- Output Tokens: The code the AI actually generates. At $14.00 per million, these are the “premium” tokens, reflecting the high GPU power required to write new logic from scratch.

The Context Window Factor: A 400,000-Token Double-Edged Sword

One of the most impressive—and potentially dangerous—features of the new GPT-5.3-Codex is its massive 400,000-token context window.

To put that in perspective, you can now feed the model entire libraries or massive chunks of your codebase in a single go. While this allows for unprecedented architectural awareness and accuracy, it also means a single “Oops” moment—like accidentally attaching a massive dependency folder to your prompt—could process hundreds of thousands of input tokens in seconds.

4. Crunching the Numbers: What Does it Actually Cost?

For engineering managers and CFOs, the shift to token-based billing means the “black box” of AI spending is finally being opened. However, transparency comes with a variable price tag. To keep your budget under control, you need to understand the specific rates applied to the new GPT-5.3-Codex model.

The pricing structure reflects the technical reality of LLMs: it is significantly “cheaper” for the AI to read your code (Input) than it is to think and write new logic (Output).

GPT-5.3-Codex Pricing Reference

| Metric | Rate / Description |

|---|---|

| Input Tokens | $1.75 per million tokens |

| Output Tokens | $14.00 per million tokens |

| Context Window | Up to 400,000 tokens |

| Fast Mode | 2x Credit Consumption (Double the cost) |

| Average Estimated Cost | $100 – $200 per developer / month |

Breaking Down the “Hidden” Costs

While the rates per million tokens might look small, they scale rapidly in a professional environment. Here are the three factors that will determine where you land on the $100–$200 monthly estimate:

- The Output Premium: Notice that Output tokens are 8 times more expensive than Input. If you use Codex to generate massive boilerplate files or entire modules from scratch, your “token burn” will be significantly higher than if you use it for code reviews or debugging.

- The “Fast Mode” Penalty: In the heat of a deadline, it’s tempting to leave “Fast Mode” on. However, OpenAI has confirmed that this doubles the credit debit. For a team of 50 developers, leaving Fast Mode on by default could be the difference between a $5,000 and a $10,000 monthly bill.

- Variance in Usage: OpenAI explicitly notes that these averages are just that—averages. A developer performing simple unit test generation will cost the company pennies, while a lead architect using the full 400k context window to refactor a legacy monolith could easily exceed the $200 mark.

Pro Tip: Keep an eye on your Cached Input. While not explicitly priced in every tier yet, utilizing cached tokens is the most effective way to keep “Input” costs low when working on the same file over a long session.

5. Winners vs. Losers: The Economic Impact

The move to token-based billing creates a new economic landscape in the dev world. It effectively ends the “cross-subsidization” era, where low-intensity users were paying the same as those running massive automated scripts. As with any shift to usage-based pricing, there are clear winners and losers.

The Winners: The “Surgical” Coders

For the developer who uses AI as a precision tool rather than a constant co-pilot, this change might actually be a financial win—or at least a neutral shift.

- Small-Scale Projects: If you are working on modern, modular codebases with small file sizes, your “Input” costs remain negligible.

- Precision Prompting: Developers who write targeted queries (e.g., “Optimize this specific 20-line function”) rather than dumping entire modules into the prompt will see very low credit consumption.

- The “Snippeters”: Those who use Codex primarily for syntax reminders or generating small unit tests will find that a few dollars goes a very long way under the $1.75/million token rate.

The Losers: The “Heavy Lifters” and Automation Addicts

On the other side of the spectrum, certain workflows are about to become significantly more expensive. For these “Power Users,” the days of $20/month flat-fees are a distant memory.

- Legacy Monoliths: If you’re working on a “God Object” or a legacy file with 10,000 lines of code, simply opening that file with Codex active will burn through tokens. Every time the AI “reads” the file to provide context, the meter runs.

- Autonomous Agents: This is the biggest “at-risk” group. Agents that run in loops—scanning entire repositories to find bugs, document code, or refactor architecture—can consume millions of tokens in a single afternoon. Under the new pricing, a single “unsupervised” agent run could cost more than a developer’s entire monthly seat used to.

- The “Fast Mode” Loyalists: Teams that have integrated Codex into their CI/CD pipelines or real-time pair programming with “Fast Mode” permanently toggled on will see their costs double instantly, making “speed” a high-priced luxury.

The Bottom Line

We are moving toward a world where Context Efficiency is a new technical skill. Developers who know how to manage their “context window” will be seen as budget-conscious assets, while those who carelessly flood the model with data may find themselves answering to the finance department.

7. Optimization Tips: How to Lower the Bill

As the saying goes, “What gets measured gets managed.” Now that Codex is metered, the most successful developers will be those who treat their token usage with the same efficiency they apply to their code. Here is how to keep your development costs from spiraling.

1. Master Context Management

The 400,000-token window is a powerhouse, but it’s also a potential money pit. In a token-based world, “loading everything” is a legacy habit that needs to die.

- Be Selective: Only feed the specific files or modules relevant to your task. If you’re debugging a React component, you don’t need to send the model your entire Python backend folder.

- The “Context Hygiene” Rule: Start fresh conversations frequently. Long threads carry the “baggage” of every previous message in the history, exponentially increasing the input tokens for every new request.

2. Leverage Prompt Caching

OpenAI’s new system rewards repetition through Prompt Caching. When you reuse a large chunk of text (like a system prompt or a standard codebase header) across multiple requests, you can get a massive discount (often up to 90% off the standard input rate).

- Order Matters: To hit the cache, keep your static content (instructions, core documentation) at the beginning of your prompt. Put the variable, ever-changing part of your code at the end.

- Stability: Avoid adding dynamic data like timestamps or unique session IDs to the start of your prompts, as this breaks the cache and forces you to pay full price for the entire block.

3. “Mode Awareness”: Fast vs. Standard

The Fast Mode is essentially a productivity tax. Since it consumes double the credits, it should be treated as a strategic resource rather than a default setting.

- Standard Mode: Use this for 90% of your day—routine boilerplate, documentation, or basic refactoring.

- Fast Mode: Reserve this for complex architectural problems, high-pressure debugging during a “site down” emergency, or when you are genuinely blocked by the model’s reasoning speed.

4. Use the “Right Model” for the Task

Not every bug requires the full power of GPT-5.3-Codex.

- For simple tasks like writing docstrings, formatting code, or generating basic unit tests, consider switching to “Mini” models if available in your tier. These models often process tokens at a fraction of the cost, stretching your monthly budget significantly further.

Final Tip: Check your OpenAI dashboard weekly. Setting up budget alerts and “soft limits” is no longer just for API users; it’s a necessary safety net for every Business and Enterprise team to avoid “bill shock” at the end of the month.

Conclusion: The Professionalization of AI Development

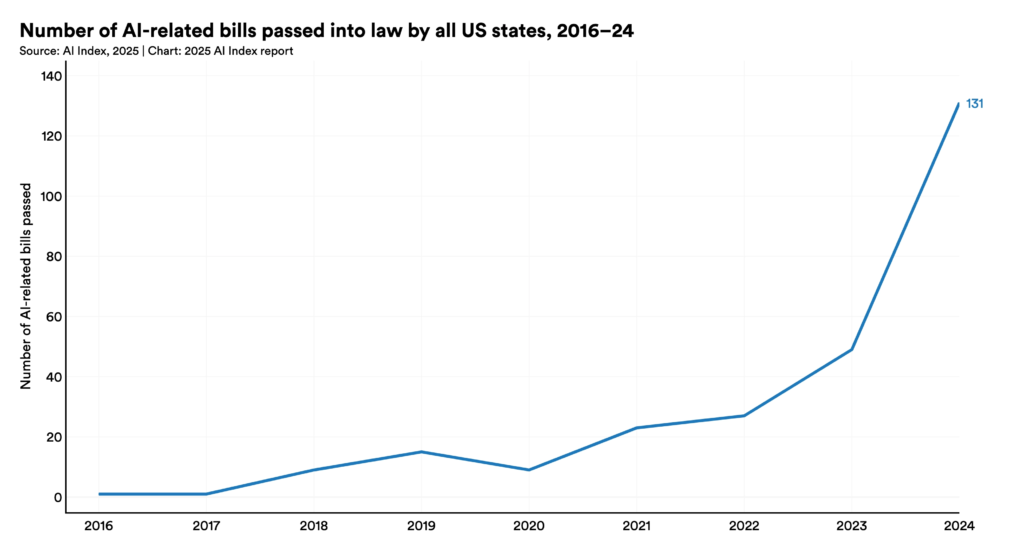

The shift from per-message to token-based billing isn’t just a minor line-item change on an invoice; it’s a clear signal that the AI industry is maturing. For years, OpenAI and its competitors focused on growth at all costs, often subsidizing heavy users to build habits and collect data. Those days are officially over.

By moving to this granular model, OpenAI is aligning itself with the reality of cloud computing. Much like AWS or Google Cloud, you now pay for the exact “compute” you consume. While this might feel like a burden to power users, it introduces a necessary level of transparency. We are entering an era where “Prompt Engineering” is evolving into “Token Engineering”—where the efficiency of your context management is just as important as the quality of your code.

The Bigger Picture

This move is also a strategic counter-strike. With Anthropic recently surging past OpenAI in annualized revenue—hitting a staggering $30 billion run rate by leaning heavily into enterprise contracts—OpenAI has no choice but to prove its business model is sustainable. By charging “heavy lifters” their fair share, OpenAI is shoring up its finances to continue the development of even more powerful models like GPT-5.4.

What’s Next for You?

For the individual developer or the small startup, the impact of these changes will likely be minimal. But for enterprise leaders, now is the time to audit your AI workflows.

- Are your automated agents running in infinite loops?

- Is your team habitually pasting entire repositories into prompts?

- Are you paying for “Fast Mode” speed that you don’t actually need?

The tools are more powerful than ever, but the “Open Bar” is closed. As we move further into 2026, the most successful engineering teams won’t just be the ones using the best AI—they’ll be the ones using it the most efficiently.